Recently, I got a Steam Deck OLED. Obviously, one of the main reasons for that is to run a certain emulation app (not yet announced here) on it, so I installed Bazzite instead of SteamOS, cleaned up the preinstalled junk, and got a clean desktop along with the Steam session/gaming mode.

For the most part, it just works (in desktop mode, at least), but there was one problematic area: input.

Gamepad input

Gamepads in general are difficult. While you can write generic evdev code dealing with, say, keyboard input and be reasonably sure it will work with at least the majority of keyboards, that’s not the case for gamepads. Buttons will use random input codes. Different gamepads will use different input types for the same control (for example, the D-pad can be presented as 4 buttons, 2 hat axes or 2 absolute axes). The Linux kernel includes specialized HID drivers for some gamepads, which work reasonably well out of the box, but in general all bets are off.
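To make the D-pad point concrete, here is a minimal sketch of the kind of normalization code a gamepad library ends up needing. It folds two of the common evdev representations (four BTN_DPAD_* buttons, or an ABS_HAT0X/ABS_HAT0Y hat) into one state struct. The constants are copied from `<linux/input-event-codes.h>` so it compiles without kernel headers; `DpadState` and `dpad_apply_event()` are hypothetical names, and D-pads reported as plain absolute axes are deliberately left out, because telling those apart from sticks needs per-device knowledge.

```c
#include <stdbool.h>
#include <stdint.h>

/* Values copied from <linux/input-event-codes.h>. */
#define EV_KEY         0x01
#define EV_ABS         0x03
#define ABS_HAT0X      0x10
#define ABS_HAT0Y      0x11
#define BTN_DPAD_UP    0x220
#define BTN_DPAD_DOWN  0x221
#define BTN_DPAD_LEFT  0x222
#define BTN_DPAD_RIGHT 0x223

/* Hypothetical normalized D-pad state. */
typedef struct {
    bool up, down, left, right;
} DpadState;

/* Feed one evdev event; returns true if it was a D-pad event we understood.
 * Absolute-axis D-pads are not handled: they look like sticks without a mapping. */
static bool dpad_apply_event(DpadState *s, uint16_t type, uint16_t code, int32_t value)
{
    if (type == EV_KEY) {
        bool pressed = value != 0; /* 1 = press, 2 = autorepeat, 0 = release */
        switch (code) {
        case BTN_DPAD_UP:    s->up = pressed;    return true;
        case BTN_DPAD_DOWN:  s->down = pressed;  return true;
        case BTN_DPAD_LEFT:  s->left = pressed;  return true;
        case BTN_DPAD_RIGHT: s->right = pressed; return true;
        }
    } else if (type == EV_ABS) {
        /* Hats report -1, 0 or 1 per axis. */
        if (code == ABS_HAT0X) {
            s->left = value < 0;
            s->right = value > 0;
            return true;
        }
        if (code == ABS_HAT0Y) {
            s->up = value < 0; /* negative Y is up */
            s->down = value > 0;
            return true;
        }
    }
    return false;
}
```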

Projects like SDL have gamepad mapping databases – normalizing input for all gamepads into a standardized list of inputs.
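An entry in SDL’s mapping database is a single line: a GUID, a name, then comma-separated `control:binding` pairs such as `a:b0` (raw button 0) or `dpup:h0.1` (hat 0, up). The sketch below looks up one binding in such a line. The `mapping_lookup()` helper and the example entry in the usage are made up for illustration; they are not taken from the real database or from SDL’s own parser.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: find the raw binding for a standardized control
 * ("a", "dpup", "leftx", ...) in one "GUID,Name,control:binding,..." line.
 * Copies the binding (e.g. "b0", "h0.1", "a1") into out. */
static bool mapping_lookup(const char *mapping, const char *control, char *out, size_t out_len)
{
    size_t name_len = strlen(control);
    const char *p = mapping;
    int field = 0;

    while (*p) {
        const char *end = strchr(p, ',');
        size_t len = end ? (size_t)(end - p) : strlen(p);

        /* Fields 0 and 1 are the GUID and the human-readable name. */
        if (field >= 2 && len > name_len && p[name_len] == ':' &&
            strncmp(p, control, name_len) == 0) {
            size_t value_len = len - name_len - 1;
            if (value_len + 1 > out_len)
                return false;
            memcpy(out, p + name_len + 1, value_len);
            out[value_len] = '\0';
            return true;
        }
        if (!end)
            break;
        p = end + 1;
        field++;
    }
    return false;
}
```

Given a hypothetical entry like `00000000000000000000000000000000,Hypothetical Pad,a:b0,dpup:h0.1,...`, looking up `dpup` yields `h0.1`. The whole point of the database is that this string differs per gamepad, while the app only ever asks for `dpup`.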

However, even that doesn’t guarantee they will work. Gamepads will pretend to be other gamepads (for example, it’s very common to emulate an Xbox gamepad) and end up with an incorrect mapping as a result. Some gamepads even share identical IDs while physically having different sets of buttons, meaning there’s no way to map both at the same time.

As such, apps have to expect that a gamepad may or may not work correctly, and that the user may or may not need to remap it.

Steam controllers

Both the standalone Steam Controller and Steam Deck’s internal gamepad pose a unique challenge: in addition to being gamepads with every problem mentioned above, they also emulate keyboard and pointer input. To make things more complicated, Steam has a built-in userspace HID driver for these controllers, with subtly different behavior between it and the Linux kernel driver. SteamOS and Bazzite both autostart Steam in the background in desktop mode.

If one tries to use evdev in a generic way, same as for other gamepads, the results will not be pretty:

In desktop mode, Steam emulates a virtual XInput (Xbox) gamepad. This gamepad works, except it lacks the Steam and QAM (Quick Access Menu) buttons, as well as the 4 back buttons (L4, L5, R4, R5). That’s fine for most games, but not for emulators, where in addition to the in-game controls you need a button to exit the game or open a menu.

It also provides 2 action sets: Desktop and Gamepad. In the desktop action set, none of the gamepad buttons act like gamepad buttons at all; instead, they emulate a keyboard and mouse. The D-pad acts as arrow keys, the A button as Enter, the B button as Esc, and so on. This is called “lizard mode” for some reason, and on Steam Deck it is toggled by holding the Menu (Start) button. Once you switch to the gamepad action set, gamepad buttons act as a gamepad, with the caveat mentioned above.

The gamepad action set also changes how the left touchpad behaves: instead of scrolling and middle-clicking on press, it right-clicks on press, and moving a finger on it does nothing.

hid-steam

Linux kernel includes a driver for these controllers, called hid-steam, so you don’t have to be running Steam for it to work. While it does most of the same things Steam’s userspace driver does, it’s not identical.

Lizard mode is similar. The only differences are that haptic feedback on the right touchpad stops right after you lift your finger instead of when the cursor stops, and that the left touchpad scrolls at a different speed and does nothing on press.

The gamepad device is different, though – it’s now called “Steam Deck” instead of “Microsoft X-Box 360 pad 0”, and this time every button is available, in addition to the touchpads, each presented as a hat and a button (though there’s no feedback when pressing).

The catch? It disables touchpads’ pointer input.

The driver was based on the Steam Deck HID code from SDL, and in SDL it made sense: SDL is made for (usually fullscreen) games, and if you’re playing one with a gamepad, you don’t need a pointer anyway. It makes less sense for emulators or other desktop apps, though. It would be really nice to have gamepad input AND touchpads, ideally automatically, without needing to toggle modes manually.

libmanette

libmanette is the GNOME gamepad library, originally split from gnome-games. It’s very simple: it basically acts as a wrapper around evdev and the SDL mappings database, and has an API for mapping gamepads from apps.

So, I decided to add proper support for the Steam Deck. This essentially means writing our own HID driver.

Steam udev rules

First, hidraw access is currently blocked by default, and you need a udev rule to allow it. This is what the well-known Steam udev rules do for Valve devices, as well as for a bunch of other popular gamepads.

There are a few interesting developments in the kernel, logind and xdg-desktop-portal, so we may get easier access to these devices in future, but for now we need udev rules. That said, it’s pretty safe to assume that if you have a Steam Controller or Steam Deck, you already have those rules installed.
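Without such a rule, the symptom is simply that opening the node fails with a permission error. A minimal sketch of telling that apart from a missing node, using plain POSIX `open()`; `hidraw_try_open()` and its result enum are hypothetical names, and opening read-write and non-blocking is an assumption about how the node would be used.

```c
#include <errno.h>
#include <fcntl.h>
#include <unistd.h>

/* Hypothetical result codes for opening a /dev/hidraw* node. */
typedef enum {
    HIDRAW_OPENED,
    HIDRAW_NO_PERMISSION, /* node exists, but no udev rule grants us access */
    HIDRAW_MISSING,
    HIDRAW_OTHER_ERROR,
} HidrawOpenResult;

/* Try to open a hidraw node read-write and non-blocking (assumed usage);
 * on success the fd is stored in *fd_out and must be closed by the caller. */
static HidrawOpenResult hidraw_try_open(const char *path, int *fd_out)
{
    int fd = open(path, O_RDWR | O_NONBLOCK);
    if (fd >= 0) {
        *fd_out = fd;
        return HIDRAW_OPENED;
    }
    switch (errno) {
    case EACCES:
    case EPERM:
        return HIDRAW_NO_PERMISSION;
    case ENOENT:
        return HIDRAW_MISSING;
    default:
        return HIDRAW_OTHER_ERROR;
    }
}
```

Distinguishing `HIDRAW_NO_PERMISSION` is useful mostly for diagnostics: it is the case where pointing the user at the udev rules actually helps.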

Writing a HID driver

Finally, we get to the main part of the article, everything before this was introduction.

We need to do a few things:

1. Disable lizard mode on startup

2. Keep disabling it every now and then, so that it doesn’t get reenabled (this is unfortunately necessary and SDL does the same thing)

3. Handle input ourselves

4. Handle rumble

Both SDL and hid-steam will be excellent references for most of this, and we’ll be referring to them a lot.

For the actual HID calls, we’ll be using hidapi.

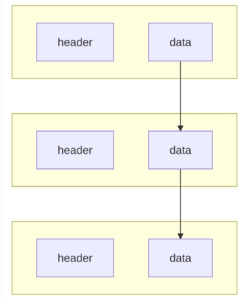

Before that, we need to find the device itself. Raw HID devices are exposed differently from evdev ones, as /dev/hidraw* instead of /dev/input/event*, so first libmanette needs to search for those (either using gudev, or by monitoring /dev when running in Flatpak).

Since we’re doing this for a very specific gamepad, we don’t need to worry about filtering out other input devices: this is an allowlist, so anything not on it is simply ignored. We just match by vendor ID and product ID. The Steam Deck is 28DE:1205 (at least the OLED model; as far as I can tell, the PID is the same for the LCD model).
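The allowlist itself can be a small table. In this sketch, the type and function names are hypothetical, and the VID/PID pair would come from whatever enumerated the device, for example the `vendor_id`/`product_id` fields of hidapi’s `hid_device_info`, or the `HIDIOCGRAWINFO` ioctl on a hidraw node.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint16_t vendor_id;
    uint16_t product_id;
    const char *name;
} SupportedDevice;

/* Hypothetical allowlist of devices with a dedicated HID driver.
 * Only the Steam Deck (28DE:1205) for now; everything else is ignored. */
static const SupportedDevice supported_devices[] = {
    { 0x28DE, 0x1205, "Steam Deck" },
};

static const SupportedDevice *find_supported_device(uint16_t vid, uint16_t pid)
{
    for (size_t i = 0; i < sizeof supported_devices / sizeof supported_devices[0]; i++)
        if (supported_devices[i].vendor_id == vid && supported_devices[i].product_id == pid)
            return &supported_devices[i];
    return NULL;
}
```

Adding another dedicated driver later would then mostly mean adding a row.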

However, there are 3 devices like that: the gamepad itself, but also its emulated mouse and keyboard. Well, sort of. Only hid-steam uses those devices; Steam instead sends keyboard and mouse input via XTEST. Since that obviously doesn’t work on Wayland, there’s instead a uinput device provided by extest.

SDL’s code tells us that only the gamepad device can actually receive HID reports, so the right device is the one we can read from.
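One possible way to apply that rule, assuming each candidate node is already open in non-blocking mode: wait briefly and see whether an input report arrives. Since the gamepad updates every 4 ms, a short timeout should be enough. `fd_receives_reports()` is a hypothetical helper built on `poll()` and `read()`; with hidapi, `hid_read_timeout()` would fill the same role. This is a heuristic sketch, not SDL’s or libmanette’s exact check.

```c
#include <poll.h>
#include <stdbool.h>
#include <unistd.h>

/* Hypothetical check: wait up to timeout_ms for an input report on an
 * already-open fd and consume it. Of the three hidraw nodes sharing the
 * Deck's VID/PID, only the gamepad interface is expected to deliver reports. */
static bool fd_receives_reports(int fd, int timeout_ms)
{
    struct pollfd pfd = { .fd = fd, .events = POLLIN };
    if (poll(&pfd, 1, timeout_ms) <= 0 || !(pfd.revents & POLLIN))
        return false;

    unsigned char buf[64];
    return read(fd, buf, sizeof buf) > 0;
}
```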

Disabling lizard mode

Next, we need to disable lizard mode. SDL sends an ID_CLEAR_DIGITAL_MAPPINGS report to disable keyboard/mouse emulation, then changes a few settings, namely disabling the touchpads. As mentioned above, hid-steam does the same thing, since it was based on this code.

However, we don’t want to disable touchpads here.

What we want to do instead is send an ID_LOAD_DEFAULT_SETTINGS feature report to reset the settings changed by hid-steam, and then disable scrolling only for the left touchpad. We’ll make it right click instead, like Steam does.
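All of these are small feature reports. A minimal sketch of building them, assuming the layout SDL uses: a leading report number 0, then a header with the message ID and payload length, then the payload, in a 65-byte buffer that could be handed to hidapi’s `hid_send_feature_report()`. The numeric IDs, and the choice of `TRACKPAD_NONE` as “disable scrolling on the left touchpad”, are my reading of SDL’s controller_constants.h and hid-steam rather than confirmed values, so verify them before use.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* ASSUMED values, as I understand them from SDL and hid-steam; verify. */
#define ID_CLEAR_DIGITAL_MAPPINGS   0x81
#define ID_SET_SETTINGS_VALUES      0x87
#define ID_LOAD_DEFAULT_SETTINGS    0x8E
#define SETTING_LEFT_TRACKPAD_MODE  7
#define TRACKPAD_NONE               7

#define FEATURE_REPORT_SIZE 65 /* leading report number 0 + 64 bytes */

/* Fill buf with: report number 0, message ID, payload length, payload.
 * Returns the number of bytes to send, or 0 if the payload does not fit. */
static size_t build_feature_report(uint8_t buf[FEATURE_REPORT_SIZE], uint8_t msg_id,
                                   const uint8_t *payload, uint8_t payload_len)
{
    if (payload_len > FEATURE_REPORT_SIZE - 3)
        return 0;
    memset(buf, 0, FEATURE_REPORT_SIZE);
    buf[0] = 0; /* report number */
    buf[1] = msg_id;
    buf[2] = payload_len;
    if (payload_len)
        memcpy(buf + 3, payload, payload_len);
    return FEATURE_REPORT_SIZE;
}

/* One settings entry: a 1-byte setting number followed by a
 * little-endian 16-bit value (layout assumed from SDL). */
static size_t build_set_setting(uint8_t buf[FEATURE_REPORT_SIZE], uint8_t setting, uint16_t value)
{
    uint8_t payload[3] = { setting, (uint8_t)(value & 0xFF), (uint8_t)(value >> 8) };
    return build_feature_report(buf, ID_SET_SETTINGS_VALUES, payload, sizeof payload);
}
```

Under these assumptions, the startup sequence would be: ID_CLEAR_DIGITAL_MAPPINGS with an empty payload, ID_LOAD_DEFAULT_SETTINGS with an empty payload, a SETTING_LEFT_TRACKPAD_MODE update, and finally the digital mappings that restore clicking.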

This keeps the right touchpad moving the pointer, but the ID_CLEAR_DIGITAL_MAPPINGS report sent earlier disabled touchpad clicking, so we also need to restore it. For that, we need the ID_SET_DIGITAL_MAPPINGS report. SDL doesn’t have an existing struct for its payload (likely because of struct padding issues), so I had to figure it out myself. After the standard zero byte and the header, the structure is as follows:

- 8 bytes: buttons bitmask

- 1 byte: emulated device type

- 1 byte: a mouse button for DEVICE_MOUSE, a keyboard key for DEVICE_KEYBOARD, etc.

Note that the SDL MouseButtons enum starts from 0, while the IDs the Steam Deck accepts start from 1, so MOUSE_BTN_LEFT should be 1, MOUSE_BTN_RIGHT should be 2, and so on.

Then the structure repeats, up to 6 times in the same report.

ID_GET_DIGITAL_MAPPINGS returns the same structure.
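Packing that structure is mostly byte shuffling. The sketch below writes up to six entries after the zero byte and the header, and includes the +1 fix for mouse buttons. The `DigitalMapping` type, the helper names, the numeric ID_SET_DIGITAL_MAPPINGS value and the little-endian byte order of the 8-byte mask are all assumptions; the real button bits (such as the left pad’s) and the DEVICE_MOUSE value should be taken from SDL rather than from this code.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define ID_SET_DIGITAL_MAPPINGS 0x80 /* ASSUMED, as I understand it from SDL; verify */
#define MAX_DIGITAL_MAPPINGS 6
#define DIGITAL_MAPPING_SIZE 10      /* 8-byte mask + device type + button/key */

/* Hypothetical in-memory form of one entry. */
typedef struct {
    uint64_t buttons;    /* Steam Deck button bitmask (real bits: see SDL) */
    uint8_t device_type; /* emulated device, e.g. DEVICE_MOUSE (value: see SDL) */
    uint8_t value;       /* 1-based mouse button, or keyboard key */
} DigitalMapping;

/* SDL's mouse button enum starts at 0; the Deck expects 1 for left, 2 for right, ... */
static uint8_t deck_mouse_button(uint8_t sdl_mouse_button)
{
    return (uint8_t)(sdl_mouse_button + 1);
}

/* Pack up to 6 mappings after the zero byte and the [msg id, length] header.
 * The mask is written little-endian (an assumption). Returns bytes used, 0 on error. */
static size_t build_digital_mappings(uint8_t *buf, size_t buf_len,
                                     const DigitalMapping *maps, size_t count)
{
    size_t payload_len = count * DIGITAL_MAPPING_SIZE;
    if (count == 0 || count > MAX_DIGITAL_MAPPINGS || buf_len < 3 + payload_len)
        return 0;
    memset(buf, 0, buf_len);
    buf[1] = ID_SET_DIGITAL_MAPPINGS;
    buf[2] = (uint8_t)payload_len;
    uint8_t *p = buf + 3;
    for (size_t i = 0; i < count; i++) {
        for (int b = 0; b < 8; b++)
            *p++ = (uint8_t)(maps[i].buttons >> (8 * b));
        *p++ = maps[i].device_type;
        *p++ = maps[i].value;
    }
    return 3 + payload_len;
}
```

Six entries take 3 + 60 = 63 bytes, which still fits in the 65-byte feature report buffer.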

So, setting digital mappings for:

- STEAM_DECK_LBUTTON_LEFT_PAD, DEVICE_MOUSE, MOUSE_BTN_RIGHT

- STEAM_DECK_LBUTTON_RIGHT_PAD, DEVICE_MOUSE, MOUSE_BTN_LEFT

(with the mouse button enum fixed to start from 1 instead of 0)

reenables clicking. Now we have working touchpads even without Steam running, with the rest of gamepad working as a gamepad, automatically.

Keeping it disabled

We also need to do this periodically, to prevent hid-steam from reenabling lizard mode. SDL does it every 200 updates, so about every 800 ms (the update rate is 4 ms), and the same rate works fine here. Note that on these periodic updates SDL doesn’t resend everything it set initially, only SETTING_RIGHT_TRACKPAD_MODE. I don’t know why, and doing the same thing did not work for me, so I just reuse the code detailed above, and it works fine. It does mean that clicks from touchpad presses are ended and immediately restarted every 800 ms, but that doesn’t seem to cause any issues in practice, even with e.g. drag-n-drop.
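The timing arithmetic is simple enough to show directly: at one update every 4 ms, 200 updates is 800 ms. A sketch of the counter, with hypothetical names; what gets resent when it fires is the full disable-lizard-mode sequence described in the previous section.

```c
#include <stdbool.h>
#include <stdint.h>

#define UPDATE_INTERVAL_MS   4   /* the Deck reports its state every 4 ms */
#define RESEND_EVERY_UPDATES 200 /* same cadence as SDL: 200 * 4 ms = 800 ms */

/* Hypothetical per-device counter. */
typedef struct {
    uint32_t updates_since_resend;
} LizardGuard;

/* Call once per received input report; returns true when the
 * disable-lizard-mode sequence should be sent again. */
static bool lizard_guard_tick(LizardGuard *g)
{
    if (++g->updates_since_resend >= RESEND_EVERY_UPDATES) {
        g->updates_since_resend = 0;
        return true;
    }
    return false;
}
```

Counting reports rather than using a separate timer keeps the resend tied to the input loop that is already running.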

Handling gamepad input

This part was straightforward. Every 4 ms we poll the gamepad and receive the entire state in a single struct: buttons as a bitmask, stick coordinates, trigger values, but also touchpad coordinates, touchpad pressure, accelerometer and gyro.
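Because everything arrives in one packet, decoding is mostly a matter of reading little-endian fields at fixed offsets and diffing the button mask against the previous packet. The sketch below shows those pieces with hypothetical helpers. It intentionally doesn’t hardcode field offsets or button bits, which should be taken from the Deck state packet definition in SDL’s HIDAPI code; stick values are assumed to be signed 16-bit and are scaled to [-1, 1].

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

static int16_t read_le16(const uint8_t *p)
{
    return (int16_t)(uint16_t)(p[0] | (p[1] << 8));
}

static uint64_t read_le64(const uint8_t *p)
{
    uint64_t v = 0;
    for (int i = 7; i >= 0; i--)
        v = (v << 8) | p[i];
    return v;
}

/* Map a raw signed 16-bit stick value to [-1, 1] (assumed stick format). */
static double normalize_axis(int16_t raw)
{
    return raw < 0 ? raw / 32768.0 : raw / 32767.0;
}

/* Hypothetical binding from a bit in the Deck's button mask to an app-side ID. */
typedef struct {
    uint64_t mask;
    int button_id;
} ButtonBinding;

typedef struct {
    int button_id;
    bool pressed;
} ButtonEvent;

/* Write one event per bound button whose state changed between two packets.
 * out must have room for n events; returns the number written. */
static size_t diff_buttons(uint64_t old_mask, uint64_t new_mask,
                           const ButtonBinding *bindings, size_t n, ButtonEvent *out)
{
    uint64_t changed = old_mask ^ new_mask;
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        if (changed & bindings[i].mask) {
            out[count].button_id = bindings[i].button_id;
            out[count].pressed = (new_mask & bindings[i].mask) != 0;
            count++;
        }
    }
    return count;
}
```

Only buttons present in the binding table produce events, which matches exposing just a subset of the mask.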

Right now we only expose a subset of buttons, as well as stick coordinates. There are some very interesting values in the button mask though, for example whether the sticks are currently being touched, and whether the touchpads are currently being touched and/or pressed. We may expose that in future, e.g. with an API to disable the touchpads like SDL does and offer the raw coordinates and pressure instead. Or do things on touch and/or click. Or send haptic feedback. We’ll see.

libmanette event API is pretty clunky, but it wasn’t very difficult to wrap these values and send them out.

Rumble

For rumble, we’re doing the same thing as SDL: sending an ID_TRIGGER_RUMBLE_CMD report. There are a few magic numbers involved, e.g. for the left and right gain values, which presumably originated in SDL, were copied into hid-steam, and are now in libmanette as well ^^
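The payload is a handful of little-endian fields. This sketch packs them in the order hid-steam’s rumble helper appears to use (intensity, left motor speed, right motor speed, left gain, right gain). That order is from my reading of the driver, and both the report header and the gain magic numbers are intentionally left to the caller rather than guessed here; `pack_rumble_payload()` is a hypothetical name.

```c
#include <stdint.h>

#define RUMBLE_PAYLOAD_SIZE 8

/* Pack rumble parameters as little-endian fields. Field order is ASSUMED
 * from hid-steam; the ID_TRIGGER_RUMBLE_CMD header and the gain values
 * (the "magic numbers") must be supplied by the caller from SDL/hid-steam. */
static void pack_rumble_payload(uint8_t out[RUMBLE_PAYLOAD_SIZE], uint16_t intensity,
                                uint16_t left_speed, uint16_t right_speed,
                                uint8_t left_gain, uint8_t right_gain)
{
    out[0] = intensity & 0xFF;
    out[1] = intensity >> 8;
    out[2] = left_speed & 0xFF;
    out[3] = left_speed >> 8;
    out[4] = right_speed & 0xFF;
    out[5] = right_speed >> 8;
    out[6] = left_gain;
    out[7] = right_gain;
}
```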

Skipping duplicate devices

The evdev device for the Steam Deck is still there, as is the virtual gamepad if Steam is running. We want to skip both of them. Thankfully, that’s easily done by checking VID/PID: the Steam virtual gamepad is 28DE:11FF, while the evdev device has the same PID as the hidraw one. So now we only have the HID device.
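The filter on the evdev side is a one-liner. A sketch with hypothetical names, covering exactly the two VID/PID pairs mentioned above:

```c
#include <stdbool.h>
#include <stdint.h>

#define VALVE_VID             0x28DE
#define STEAM_DECK_PID        0x1205 /* the hidraw and evdev nodes share it */
#define STEAM_VIRTUAL_PAD_PID 0x11FF /* Steam's emulated XInput pad */

/* Hypothetical filter: skip evdev devices that duplicate the hidraw Deck. */
static bool should_skip_evdev(uint16_t vid, uint16_t pid)
{
    return vid == VALVE_VID &&
           (pid == STEAM_DECK_PID || pid == STEAM_VIRTUAL_PAD_PID);
}
```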

Behavior

So, how does all of this work now?

When Steam is not running, libmanette will automatically switch to gamepad mode and enable the touchpads. Once the app exits, everything reverts to how it was before.

When Steam is running, libmanette apps will see exactly the same gamepad instead of the emulated one. However, we cannot disable lizard mode automatically in this state, so you’ll have to hold the Menu button, or you’ll get input from both the gamepad and the keyboard. Since Steam doesn’t disable touchpads in gamepad mode, they will still work as expected, so the only caveat is needing to hold the Menu button.

So, it’s not perfect, but it’s a big improvement from how it was before.

Mappings

Now that libmanette has bespoke code specifically for Steam Deck, there are a few more questions. This gamepad doesn’t use mappings, and apps can safely assume it has all the advertised controls and nothing else. They can also know exactly what it looks like. So, libmanette now has ManetteDeviceType enum, currently with 2 values: MANETTE_DEVICE_GENERIC for evdev devices, and MANETTE_DEVICE_STEAM_DECK, for Steam Deck. In future we’ll likely have more dedicated HID drivers and as such more device types. For now though, that’s it.
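As an example of what an app might do with the device type, here is a hypothetical helper that returns the printed face-button labels only when the layout is known. The enum is redeclared locally with the two values named above so the sketch compiles on its own; a real app would use libmanette’s own declaration, and `face_button_label()` is not part of libmanette.

```c
#include <stddef.h>

/* Local stand-in for libmanette's enum, with the two values named in the post. */
typedef enum {
    MANETTE_DEVICE_GENERIC,
    MANETTE_DEVICE_STEAM_DECK,
} ManetteDeviceType;

typedef enum { FACE_SOUTH, FACE_EAST, FACE_WEST, FACE_NORTH } FaceButton;

/* Hypothetical app-side helper: the printed label, or NULL when the physical
 * layout is unknown (generic evdev pads), so the app can fall back to neutral glyphs. */
static const char *face_button_label(ManetteDeviceType type, FaceButton button)
{
    static const char *const deck_labels[] = { "A", "B", "X", "Y" };
    if (type == MANETTE_DEVICE_STEAM_DECK)
        return deck_labels[button];
    return NULL;
}
```

For generic devices, the NULL return is the point: without a dedicated driver, the app can’t know what the buttons look like.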

The code is here, though it’s not merged yet.

Big thanks to people who wrote SDL and the hid-steam driver – I would definitely not be able to do this without being able to reference them. ^^

got in the way and it took a while to finish this post. I finally found a little spare time to collect my thoughts and finish writing this.
